Forget what you previously knew about high-performance storage and file systems. New I/O models for HPC such as Distributed Asynchronous Object Storage (DAOS) have been architected from the ground up to make use of new NVM technologies such as Intel® Optane™ DC Persistent Memory Modules (Intel Optane DCPMMs). With latencies measured in nanoseconds and bandwidth measured in tens of GB/s, new storage devices such as Intel DCPMMs redefine the measures used to describe high-performance nonvolatile storage.

DAOS Delivers Exascale Performance Using HPC Storage So Fast It Requires New Units of Measurement

Beyond the Delta: Compression is a Must for Big Data

In an era of big data, high-speed, reliable, cheap and scalable databases are no luxury. Our friends over at SQream invest a lot of time and effort into providing their customers with the best performance-at-scale. As such, SQream DB uses state-of-the-art HPC techniques. Some of these techniques rely on modifying existing algorithms to external technological […]

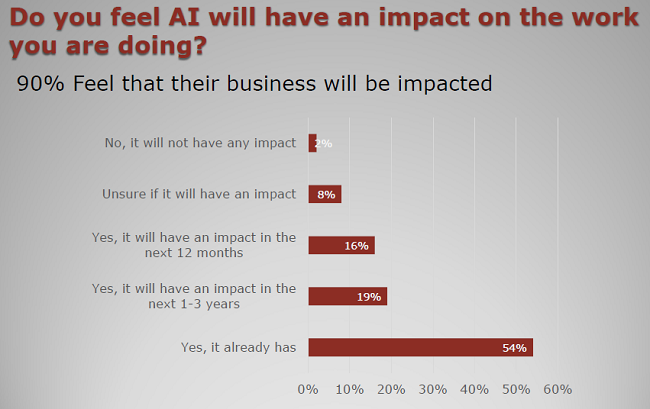

InsideHPC Market Survey Results: Intersection of AI and HPC

Hot off the press from our venerable sister publication insideHPC is the new 2018 AI/HPC Perceptions Survey, which was fielded to gain insight into the HPC community’s perceptions of the intersection of HPC and AI. The survey was conducted in October 2017 and again in February 2018, with a total of 201 responses.

Big Data Meets HPC – Exploiting HPC Technologies for Accelerating Big Data Processing

DK Panda from Ohio State University gave this talk at the Stanford HPC Conference. “This talk will provide an overview of challenges in accelerating Hadoop, Spark and Memcached on modern HPC clusters. An overview of RDMA-based designs for Hadoop (HDFS, MapReduce, RPC and HBase), Spark, Memcached, Swift, and Kafka using native RDMA support for InfiniBand and RoCE will be presented.”

The Importance of Vectorization Resurfaces

Vectorization offers potential speedups in codes with significant array-based computations—speedups that amplify the improved performance obtained through higher-level, parallel computations using threads and distributed execution on clusters. Key features for vectorization include tunable array sizes to reflect various processor cache and instruction capabilities and stride-1 accesses within inner loops.
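As a rough illustration of what "stride-1 accesses within inner loops" means, the sketch below walks a matrix stored as a flat, row-major array and records the distance between consecutive elements touched by the inner loop. The function name `inner_loop_strides` and the row-major layout are assumptions made for this example, not something taken from the article. Pure Python is not itself vectorized; the code only shows the access pattern that a vectorizing compiler looks for.

```python
def inner_loop_strides(n_rows, n_cols, row_major_inner=True):
    """Return the set of index distances between consecutive inner-loop accesses.

    The matrix is assumed to be stored as a flat, row-major array, so
    element (r, c) lives at flat index r * n_cols + c.
    """
    strides = set()
    # Choose which dimension the inner loop runs over.
    outer, inner = (n_rows, n_cols) if row_major_inner else (n_cols, n_rows)
    for i in range(outer):
        prev = None
        for j in range(inner):
            # Map loop counters back to (row, column).
            r, c = (i, j) if row_major_inner else (j, i)
            idx = r * n_cols + c
            if prev is not None:
                strides.add(idx - prev)
            prev = idx
    return strides


# Inner loop over columns: neighbouring elements, stride 1.
print(inner_loop_strides(4, 8, row_major_inner=True))
# Inner loop over rows: each access jumps a full row, stride n_cols.
print(inner_loop_strides(4, 8, row_major_inner=False))
```

With the inner loop running over columns, every access lands on the adjacent element, which is the contiguous pattern that maps onto vector loads. Swapping the loop order makes each access jump by a full row, which generally makes vectorization harder to exploit.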

Intel® Parallel Studio XE Helps Developers Take their HPC, Enterprise, and Cloud Applications to the Max

Intel® Parallel Studio XE is a comprehensive suite of development tools that make it fast and easy to build modern code that gets every last ounce of performance out of the newest Intel® processors. This tool-packed suite simplifies creating code with the latest techniques in vectorization, multi-threading, multi-node, and memory optimization.

Identifying Health Risks Using Pattern Recognition and AI

Physicians are increasingly using AI technologies to treat patients with superhuman speed and performance, and predictive analytics will be key to delivering more effective, proactive, and quality care. Stephen Wheat, Director of HPC Pursuits at Hewlett Packard Enterprise, explores how we can identify health risks using pattern recognition and AI.

Julia: A High-Level Language for Supercomputing and Big Data

Julia is a new language for technical computing that is meant to address the problem of language environments not designed to run efficiently on large compute clusters. It reads like Python or Octave, but performs as well as C. It has built-in primitives for multi-threading and distributed computing, allowing applications to scale to millions of cores. In addition to HPC, Julia is also gaining traction in the data science community.

Cray Powers Breakthrough Discoveries with Urika-XC – Delivering Analytics and AI at Supercomputing Scale

Global supercomputer leader Cray Inc. (Nasdaq: CRAY) announced the launch of the Cray® Urika®-XC analytics software suite, bringing graph analytics, deep learning, and robust big data analytics tools to the Company’s flagship line of Cray XC™ supercomputers. The Cray Urika-XC analytics software suite empowers data scientists to make breakthrough discoveries previously hidden within massive data sets, and achieve faster time-to-insight while leveraging the scale and performance of Cray XC supercomputers.

TERATEC 2017 Forum – The International Meeting for HPC, Simulation, Big Data

The TERATEC Forum is a major event in France and Europe that brings together the best international experts in HPC, Simulation and Big Data. It reaffirms the strategic importance of these technologies for developing industrial competitiveness and innovation capacity. The TERATEC Forum welcomes more than 1,300 attendees, highlighting the technological and industrial dynamism of HPC and the essential role that France plays in this field.